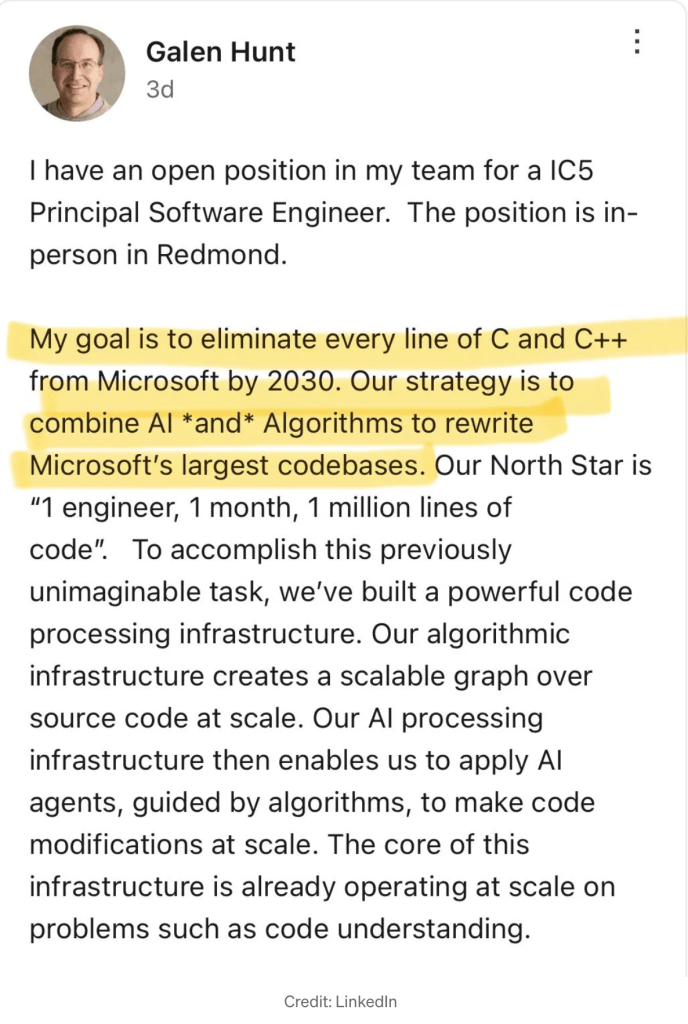

A team at METR Research recently did a study of the effectiveness of AI in software development and got some very surprising results. At the risk of oversimplifying, they report that the developers they studied were significantly slower when using AI, and moreover, tended not to recognize that fact.

METR’s summary of the paper runs to multiple pages, and the full paper is longer, so a couple of lines here can’t really do it justice. In brief: the developers had expected, on average, that AI would speed them up by about 24%, and after doing the tasks they estimated that it had sped them up by about 20%. In fact, on average, it took them about 19% longer to solve problems using AI than it did using just their unadorned skills.

My statistics days are behind me, but the work looked to be of high quality, and the people at METR seem to know how to do a study. Surprising though the result may be, the numbers are the numbers.

Yikes! How Can That Be?

Superficially, METR’s results seem wildly at odds with my experience using AI for coding. I used it for a month, and felt that it vastly increased my output, not by a double-digit percentage, but by a large factor. Four times? Five times? More? It’s impossible to know for sure, but a lot.

Yet after reading the study more carefully, it began to seem like a dog-bites-man story, and I came to feel that its results strongly validate my own experience and understanding of AI. If you take the message to be “AI doesn’t make developers faster,” you are probably missing the point.

(Don’t take my one-paragraph summary too seriously. METR’s summary can be seen here. The full study can also be reached from within that page.)
