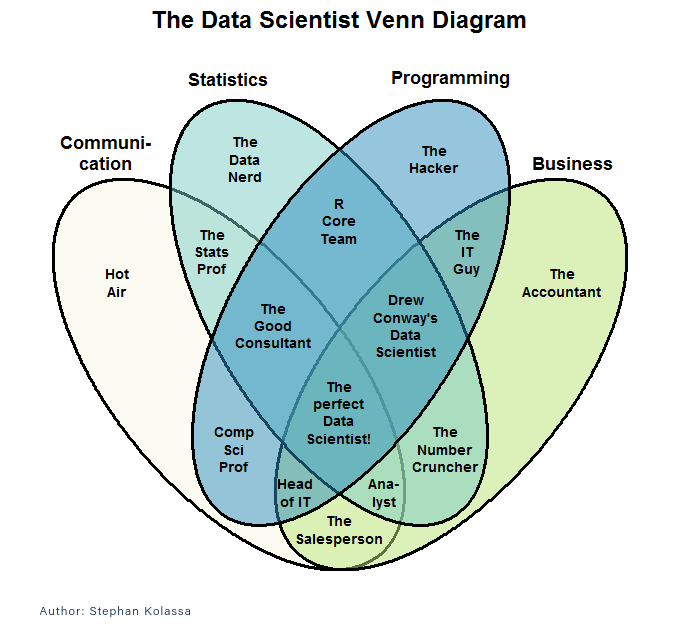

This is the third in a series of posts charting the progress of a programmer starting out in data science. The first post is A Pilgrim’s Progress #1: Starting Data Science. The previous post is A Pilgrim’s Progress #2: The Data Science Tool Kit.

What Is NumPy?

NumPy is a library of high-performance arrays for Python. From here on I'll mostly call it numpy, because that's the name of the package you import. Whatever we call it, numpy lets you create and manipulate arrays of any number of dimensions, and easily reshape and slice them in complex ways on the fly.
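To make the idea of reshaping concrete, here is a minimal sketch. The variable names (`flat`, `grid`, `cube`) are just mine for illustration; `np.arange` and `reshape` are standard numpy calls.

```python
import numpy as np

# A 1-D array holding the integers 0 through 11
flat = np.arange(12)

# The same twelve values arranged as 3 rows by 4 columns
grid = flat.reshape(3, 4)

# And again as a 3-D array with shape (2, 3, 2)
cube = flat.reshape(2, 3, 2)

print(flat.shape, grid.shape, cube.shape)
```

The only constraint is that the new shape has to hold the same number of elements as the old one, which is twelve in every case here.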

The elements of any numpy array can be accessed in a variety of ways. You can access single elements, of course, but there is a powerful syntax for accessing all sorts of rectilinear slices in one or more dimensions. We’ll look at some of that below.
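As a quick taste of that syntax, here is a small sketch on a 3x4 array. The array and the variable names are made up for illustration; the indexing forms themselves are standard numpy.

```python
import numpy as np

grid = np.arange(12).reshape(3, 4)
# grid looks like this:
# [[ 0  1  2  3]
#  [ 4  5  6  7]
#  [ 8  9 10 11]]

single = grid[1, 2]          # one element: row 1, column 2 -> 6
row = grid[0, :]             # the whole first row -> [0 1 2 3]
column = grid[:, 1]          # the whole second column -> [1 5 9]
block = grid[0:2, 1:3]       # a 2x2 block -> [[1 2], [5 6]]
every_other = grid[:, ::2]   # columns 0 and 2 of every row
```

Each comma-separated position in the brackets addresses one dimension, and a bare `:` means "everything along this dimension".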

As the name implies, numpy is designed to support mathematical computing, and is thus packed with convenient features for operating on data as an array or matrix.

Every programmer is used to iterating over the elements of an array with a loop or an iterator, a concept that extends easily to nested loops over multi-dimensional structures. Numpy takes a higher-level approach: it emphasizes applying operations to an entire array, rather than treating the array as a mere repository for data that loops in your code operate on explicitly. Functionally, the two approaches are of equal power, since there's still a loop going on within numpy. In practice, though, applying functions to whole data structures results in simpler, cleaner code that's easier to understand. The way I look at it, code you don't have to write has the fewest bugs, so the less code the better.
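Here is a small side-by-side sketch of the two styles, converting some temperatures from Celsius to Fahrenheit. The sample values and variable names are invented for the example.

```python
import numpy as np

# Made-up sample temperatures in degrees Celsius
celsius = np.array([0.0, 21.5, 37.0, 100.0])

# The explicit-loop style: visit each element and build up a result
fahrenheit_loop = []
for c in celsius:
    fahrenheit_loop.append(c * 9 / 5 + 32)

# The whole-array style: one expression applied to every element at once
fahrenheit = celsius * 9 / 5 + 32

# Reductions such as sum() work on the whole array too
total = fahrenheit.sum()
```

Both versions produce the same numbers, but the second one has no loop variable, no accumulator list, and nothing to get off by one.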
