A Crazy Claim

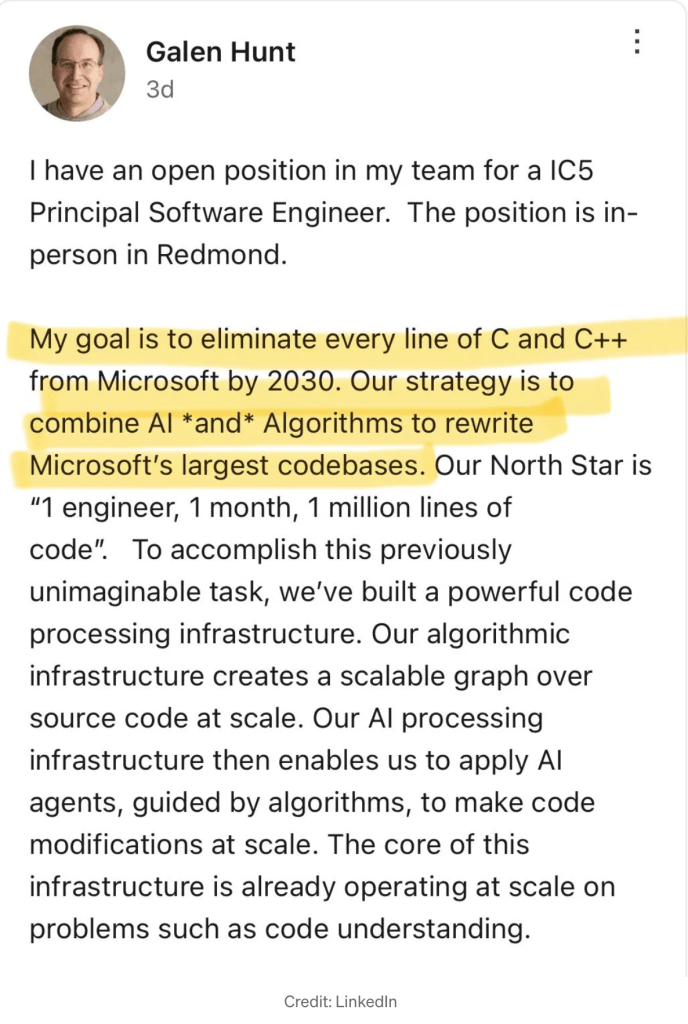

Galen Hunt of Microsoft is assembling a team right now to rewrite their entire product suite, which is currently written in C and C++, by 2030. The stated goal is to code at the rate of a million lines per month per engineer.

Don’t laugh—the goal may be extremely ambitious, but it isn’t obviously insane. There are quantifiable reasons why Mr. Hunt might not be bonkers.

The reasons take some explaining.

The State of The Art

There are still some old graybeard Unix guys who swear AI is an over-hyped fad, and they’re not going to use it. Emacs was good enough for Dad and it’s good enough for me, goddammit.

But I used AI on a major (for me) project, and I’m here to testify that it blows human developers out of the water. Using AI, I wrote 30k lines of highly effective code in a couple of weeks or so. That was six human months ago, and AI advances in dog-years. It would go faster now.

Even today, most of what interns, junior, and mid-level developers do, AI does better, and AI is an order of magnitude faster*. (That asterisk is going to do a lot of work here.) In the time it takes to explain what you want done to a human, you can write it as a prompt. Less, actually, because AI is incredibly good at filling in the gaps in your half-baked prompts. You get the code in seconds, but in big projects, the value is not just that it writes code incredibly quickly—there are deeper reasons for its power.

While I was typing the foregoing paragraph on Wednesday, a superb developer I know texted me: “I don’t write code anymore. I’ve made 30+ PRs since Monday, and I’ve read about 0.1% of the content.” This is a guy who is a master at what he does, a hands-on leader of multiple teams at a technologically sophisticated company.

Yet because AI is a tool and not a brain (at least for now), you still need people with deep understanding to define the tasks AI executes. But I’d call that whiteboard work; it’s not exactly programming.

Management is having a field day—it seems like there is no longer any reason to hire anyone, or even to retain much of your existing coding staff now that AI is in the picture. Nine tenths of programming jobs are dead on their feet now—they are only still standing because it takes a while for organizations to catch up and for technology people to learn to use it effectively. For my own part, I don’t see why I would ever hand-write another function call in any language.

*The Tar Pit Revisited

Paradoxically, the incredible increase in programming effectiveness that I experienced is not the biggest part of the power boost from AI that people like Galen Hunt are chasing for mega projects.

The reason is somewhat subtle—a little history first.

Before most of today’s programmers were born, there was a thing called the “software crisis.” In the 1970s, software engineering had not yet caught up to the advancing power of machines. Programming was rapidly leaving the strictly batch, punch-card, mainframe era behind, and program sizes were exploding. The crisis was that the majority of large projects were failing and were abandoned uncompleted. The chronic failure to deliver software had become a major problem in developed economies.

Fred Brooks, in his classic and still readable “The Mythical Man-Month” (1975), explained why. His argument boils down to a claim that large programs fail because the number of human interactions among members of a team increases quadratically with the number of programmers. One consequence is, in Brooks’ famous words, “Adding manpower to a late software project makes it later.”

The reason is that adding programmers increases a team’s capacity only linearly, while the time that must be spent coordinating everyone’s work grows quadratically.
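Brooks’ counting argument is easy to check: if every member of a team of n people talks to every other, the number of pairwise channels is n(n-1)/2. A minimal sketch in Python (the function name is illustrative, not from the original):

```python
def channels(n: int) -> int:
    """Pairwise communication channels in a team of n where everyone talks to everyone."""
    return n * (n - 1) // 2


for n in (2, 5, 10, 50):
    # Headcount grows linearly; the channel count grows roughly as n squared over 2.
    print(f"{n:>3} programmers -> {channels(n):>5} channels")
```

Going from 10 to 50 people multiplies headcount by 5 but multiplies the channel count (45 versus 1,225) by about 27.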

Today, we no longer hear the phrase “software crisis.” It ended. The solution was the development of a culture of programming that learned to write software in smaller, minimally coupled units that were defined only by their interfaces. Programmers’ tools improved too (IDEs, higher-level languages and debugging, networking, etc.), but basically, we learned to write smaller programs that talk to each other.

That’s one way to say it, but to think of the solution as writing smaller programs mistakes the means for the end. Minimizing the number of programmers on a project was the essence. Reducing the number of lines to write, or writing code faster, gives only a linear speedup, but smaller teams give you a quadratic speedup in the effectiveness of each programmer.

Where The Real Speed Comes From

It took a while to get to the punch line, but it’s this: for realistic AI speedups and team sizes, one programmer who is S times faster because of AI can often beat a team of M programmers who don’t use AI, even when M is larger than S.

Huh? It’s not very intuitive. In English, that means that AI’s inhuman ability to keep stuff in its mechanical head allows one programmer to cover the same project territory that a much larger team of M members would cover without AI. Losing the quadratically increasing communications overhead for M programmers means a lone programmer can often go faster than a team, even if M is much bigger than the boost in coding speed.

This is not true in every possible case, but it’s true over realistic ranges with big teams. We’ll see below that as long as the AI speedup factor is positive and the communications cost scales superlinearly, there is always a team size beyond which a lone AI-powered programmer will beat the team.

A Real Life Example

The 30k-line program I recently wrote using AI took about two weeks and change. That means the sustained rate of code generated was about 2,000 lines a day of decently structured, readable code that could pass for human-written. The AI code was better than my hand-written code usually is.

Critically, there was exactly one programmer involved.

If there had been two of us, productivity would not have doubled to 4k lines per day. It probably would have been more like 3k lines/day, because of the added inter-human coordination overhead.

This is a seat-of-the-pants estimate, but I expect that if there’d been five people on the team, lines/day would have been lower than with me working alone.

Theoretically, with sufficient manpower, the project never would have been completed at all.

More Mathematically

Let’s assume that AI can speed up the coding output of a single programmer by a factor of S. To keep things realistic and intuitive, let’s say that S is a good-sized speedup, like 10x.

Call the average number of developers working on a project M and let’s say in this one case, M happens to also be 10. That means our lone AI person can now rewrite the entire project as fast as the M programmers did the first time. Right?

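Wrong! The factor of S is how much AI speeds up one programmer, but M programmers do not deliver M times the output of one, because part of their time goes to communicating with each other.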

Greatly simplifying by assuming that every team member talks to every other, so that there are M(M-1)/2 distinct pairs, the cost of communication in a team of size M grows as kM(M-1)/2, where k ∈ (0, 1) is a fudge factor expressing the cost of a unit of communication between team members. It’s not an exact formula, but it’s the right kind of curve, and we’ll see below that the values don’t really matter to the basic principle.

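If each unassisted programmer produces one unit of output, the team’s effective output is M minus this overhead, while the lone AI programmer produces S units. The lone programmer’s speedup over the team is therefore:

Speedup = S / [M – k·M(M-1)/2]

which is equivalent to: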

Speedup = S / [M(1 – k(M-1)/2)]
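The model is simple enough to write down directly. This sketch assumes, as the text does, that one unassisted programmer produces one unit of output, that the lone AI-assisted programmer produces S units, and that the team loses k units for each of its M(M-1)/2 pairwise channels. The function names, and the choice to report an infinite speedup when the team makes no net progress, are illustrative assumptions.

```python
def team_output(m: int, k: float) -> float:
    """Effective output of m unassisted programmers: m minus k per pairwise channel."""
    return m - k * m * (m - 1) / 2


def speedup(s: float, m: int, k: float) -> float:
    """Ratio of the lone AI programmer's output (s) to the team's effective output."""
    out = team_output(m, k)
    if out <= 0:
        # Assumption: a team with zero or negative net output is beaten by any s > 0.
        return float("inf")
    return s / out


# The factored form m * (1 - k * (m - 1) / 2) is the same quantity.
m, k = 10, 0.1
assert abs(team_output(m, k) - m * (1 - k * (m - 1) / 2)) < 1e-12

print(team_output(10, 0.1))             # 5.5
print(round(speedup(10, 10, 0.1), 2))   # 1.82
```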

Speedup is greater than one (that is, a single AI programmer beats M unassisted programmers) when the numerator exceeds the denominator:

S > M - k·M(M-1)/2

Or equivalently when:

S > M(1 – k(M-1)/2)

What this is saying is:

- If k = 0, i.e., if communication were absolutely free, the AI speedup S has to be greater than M for one AI developer to beat a team of M developers.

- If communication has a cost greater than zero, and it always does, the communication overhead eats into the productivity of the M programmers at a rate that is quadratic in M.

- As long as S ≥ 1 and k > 0, there is always a team size beyond which the lone AI programmer will progress faster than the team.
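The last bullet can be checked numerically. Under the same quadratic model, team output M(1 – k(M-1)/2) rises, peaks, and then falls, so the team sizes that beat a lone AI programmer (if any) form one contiguous range. The hypothetical helper below scans team sizes up to the point where team output turns negative (M > 1 + 2/k) and returns the smallest size from which the lone programmer wins against every larger team. It assumes S > 0 and k > 0.

```python
import math


def team_output(m: int, k: float) -> float:
    """Effective output of m unassisted programmers under the quadratic cost model."""
    return m - k * m * (m - 1) / 2


def break_even_team_size(s: float, k: float) -> int:
    """Smallest M such that every team of M or more produces less than s.

    Assumes s > 0 and k > 0. Beyond M = 1 + 2/k the team's output is
    negative, so the scan can stop there.
    """
    if k <= 0:
        raise ValueError("k must be positive; with free communication there is no cap")
    last_team_win = 0  # largest team size whose output still reaches s
    for m in range(1, math.ceil(1 + 2 / k) + 1):
        if team_output(m, k) >= s:
            last_team_win = m
    return last_team_win + 1


print(break_even_team_size(10, 0.1))  # 1: no team of any size reaches 10 units
print(break_even_team_size(2, 0.1))   # 19: teams of 3 through 18 beat a 2x programmer
```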

And at the risk of pushing the model too far, note that for any k > 0, the term (1 – k(M-1)/2) becomes negative once the team is large enough, and the bigger k is, the sooner that happens. This means that beyond a certain size, adding more team members actually makes progress negative, i.e., the project loses ground. If this is too fanciful an interpretation of the model, we can see below that the denominator does stay positive for a realistic range of values.

The denominator M(1 – k(M-1)/2) goes negative when:

1 – k(M-1)/2 < 0

k(M-1)/2 > 1

M > 1 + 2/k

For Various Values Of k

k = 0.1 → goes negative when M > 21

k = 0.05 → goes negative when M > 41

k = 0.01 → goes negative when M > 201

k = 0.004 → goes negative when M > 501
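The thresholds in the list follow from M > 1 + 2/k. The sketch below (function names are illustrative) recomputes them and checks the model one programmer on either side of each threshold: just below it, team output is still positive; just above it, output is negative.

```python
def team_output(m: int, k: float) -> float:
    """Effective output of m unassisted programmers under the quadratic cost model."""
    return m - k * m * (m - 1) / 2


def negative_threshold(k: float) -> float:
    """Team size above which m * (1 - k * (m - 1) / 2) is negative."""
    return 1 + 2 / k


for k in (0.1, 0.05, 0.01, 0.004):
    n = round(negative_threshold(k))  # round() guards against float noise in 2 / k
    # One person below the threshold the team still makes progress; one above, it loses ground.
    assert team_output(n - 1, k) > 0 > team_output(n + 1, k)
    print(f"k = {k:<5} -> goes negative when M > {n}")
```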

A Concrete Example

This is a fairly realistic case: a team of 10 programmers versus a single programmer sped up 10x by AI, with a communication cost of a tenth of a unit.

Say S=10, M=10, k=0.1:

Team output = 10 – 0.1·45 = 5.5

One AI programmer = 10

Effective speedup = 10/5.5 = 1.82

So the lone programmer is 1.82x faster than the whole team of 10.

The critical thing here is that none of this depends on either the exact values or the particular cost function used here (kM(M-1)/2). Any superlinear communication cost function would produce similar relationships so long as k>0 and S>1. This means it’s a very general principle.
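The claim that the exact cost function does not matter can be probed numerically. The sketch below sets the text’s quadratic cost beside a hypothetical alternative, k·M^1.5 (superlinear, but gentler than quadratic), and finds, under each, the team size beyond which a lone 10x programmer wins. The exponent 1.5, the scan limit, and the function names are illustrative assumptions, not part of the original model.

```python
from typing import Callable

K = 0.1  # communication-cost fudge factor, as in the text's concrete example


def quadratic(m: int) -> float:
    """The text's cost model: K per pairwise channel, M(M-1)/2 channels."""
    return K * m * (m - 1) / 2


def power_1_5(m: int) -> float:
    """Hypothetical alternative: superlinear, but gentler than quadratic."""
    return K * m ** 1.5


def break_even(s: float, cost: Callable[[int], float], max_m: int = 10_000) -> int:
    """Smallest M such that every team from M up to max_m produces less than s.

    Assumes team output m - cost(m) rises and then falls, so no team
    larger than max_m reaches s again.
    """
    last_team_win = 0  # largest team size whose output still reaches s
    for m in range(1, max_m + 1):
        if m - cost(m) >= s:
            last_team_win = m
    return last_team_win + 1


for cost in (quadratic, power_1_5):
    m = break_even(10, cost)
    print(f"{cost.__name__:>9}: a lone 10x programmer beats every team of {m} or more")
```

Under the gentler cost, the break-even point moves much further out and some mid-sized teams outperform the 10x programmer, but a team size beyond which the lone programmer wins still exists.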

Yet, Management Shouldn’t Be Dancing In The Street

Yippee! Software development is a solved problem now, so we can all go home, right?

Not exactly, because producing software isn’t all programming.

AI may indeed take over most of the coding work. Not may—it surely will. In some places, it already has. But just as a programmer’s life isn’t mostly coding, the total staff that brings software into the world and keeps it there isn’t mostly programmers. Not by a long shot. All kinds of other people are involved, including stakeholders, managers, testers, system administrators, deployment experts, cloud engineers, QA staff, product owners, designers, marketing people, business people, sales teams, building maintenance personnel, receptionists, etc.

I have not found good numbers on this, but anyone who has been in the industry can see that the people actually checking code into source-control are a minority in a business of any significant size. And that brings us to the most consistently disappointing principle in software engineering.

Amdahl’s Law

Amdahl’s famous law applies to optimizations to speed up a program. It is a mathematical expression of the simple idea that no matter how much you optimize some portion of a program, the maximum speedup to the entire system is capped by the proportion of the computing that takes place in the chunk you optimized. It’s obvious as soon as it’s pointed out.

Imagine that your compute-intense program spends three quarters of its time in a hardcore 5% of the code. No matter how radically you optimize that critical 5%, you cannot speed up the total program by more than 4x. The general formula follows.

If P is the fraction of the total time spent in the relevant part of the process, and you speed that part up by a factor of S:

Speedup = 1 / ((1 - P) + P/S)

If you speed up the busy 5% by a big but still plausible factor, say, 10x:

Speedup = 1 / (0.25 + 0.75/10) = 1 / (0.25 + 0.075) = 1 / 0.325 ≈ 3.08

At 10x, we only tripled the overall speed. Not encouraging. If the speedup that applies to P goes to infinity, the second term in the denominator disappears, yet the total speedup only increases a little more:

Speedup = 1 / (1 - 0.75) = 1 / 0.25 = 4x

This cruel principle has broken programmer hearts for decades. Optimizing even the most critical code is usually disappointing. (Algorithmic changes are another story!)
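Amdahl’s formula is a one-liner. The sketch below (the function name is illustrative) reproduces the two numbers for the compute-bound program: three quarters of the run time sped up 10x, and the limit as that speedup grows without bound.

```python
import math


def amdahl(p: float, s: float) -> float:
    """Overall speedup when a fraction p of the run time is made s times faster."""
    return 1 / ((1 - p) + p / s)


print(round(amdahl(0.75, 10), 2))   # 3.08
print(amdahl(0.75, math.inf))       # 4.0: p / inf is 0, leaving 1 / (1 - p)
```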

We usually think of Amdahl’s Law as applying to software, but the principle can be applied to other systems as well.

The law applied to the whole software development process behaves similarly. Speeding up one part of the process can only do so much. The argument for the high-level effectiveness of Microsoft’s ambitious project across the enterprise therefore depends not only on how much AI speeds up coding, but on how much of the total process coding constitutes today.

I’m sure you see where this is going.

Let’s say the actual programming is 25% of the software development process. And let’s say that AI produces a 10x increase in the productivity per developer. And further, let’s say à la Brooks that the radical decrease in team size results in another 10x, for an overall 100x speed increase for development. Plugging these values into the equation, we see:

Speedup = 1 / ((1 – 0.25) + 0.25/100) = 1 / (0.75 + 0.0025) = 1 / 0.7525 = 1.329x

In other words, even when coding is almost infinitely fast, software only goes out the door 1/3 faster.
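Because the result hinges on the assumed 25% coding share, it is worth seeing how the answer moves with that share. The sketch below applies the same formula to the whole development process for a few shares; the shares other than 25% are hypothetical, not measurements, and 100x is the combined AI-plus-smaller-team factor assumed in the text.

```python
import math


def amdahl(p: float, s: float) -> float:
    """Overall speedup when a fraction p of the process is made s times faster."""
    return 1 / ((1 - p) + p / s)


# Hypothetical shares of the development process that are actual coding.
for coding_share in (0.10, 0.25, 0.50):
    at_100x = amdahl(coding_share, 100)       # the 10x AI times 10x team-size factor
    ceiling = amdahl(coding_share, math.inf)  # coding made infinitely fast
    print(f"coding = {coding_share:.0%}: {at_100x:.3f}x at 100x, ceiling {ceiling:.3f}x")
```

Even if half of the process were coding, the ceiling would be 1 / (1 - 0.5) = 2x.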

Is That Fair?

Maybe that’s not completely fair. Slow development and slipped schedules, etc., cause the global process to gum up to some degree, so infinitely fast development might speed up the process by more than 1.329x. Or 25% might easily be an overestimate of how much of the process is coding, which would mean the benefit would be less. But either way, it’s looking like conversion of all programming to AI might be a significant speedup with commensurate cost savings, but not exactly an economic miracle.

The Pipeline Problem

Still, a 1.329x speedup is significant, and dumping an expensive quarter of the staff is nothing to sneeze at in dollar terms.

The problem is that senior developers constitute priceless intellectual capital because they not only understand the technology underlying a complex product, they understand where that product fits in the world, and equally importantly, they have a feel for the world that is coming down the pike. They are also driven by emotion to think deeply about the purpose of what they are doing, and how to move a massive software business into the future.

And senior people were junior people first.

The things that make senior people valuable are mostly outside of what AI handles well, but the interns and the junior and mid-level devs are exactly in AI’s wheelhouse. Cutting off the junior-to-senior software engineer pipeline is a bit like the medical world realizing it can save a lot of money by dispensing with interns and residents and letting AI handle all the routine cases. It’s true that you would save a ton of money for a while, but where would the next generation of attending physicians come from?

The truly senior people rarely do a lot of coding. Why would they? Architects of buildings don’t lay brick. Automotive engineers don’t work on the factory floor. What they know is what matters, and it is still generally beyond the scope of AI; contrary to the hype, AI coverage of it does not seem to be on the immediate horizon.

It’s Not the First Time We’ve Been Down This Road

The situation we will face is similar to the mistake we in the US made with manufacturing over the last several decades, as companies moved production offshore to cut costs. That massive offshoring indeed saved a ton of money, but when you offshore the making of things, you also offshore a tremendous amount of knowledge with it.

Those kinds of skills don’t go extinct overnight. There is a lot of inertia in the system, but in a couple of decades the people with the high-end skills age out and they are no longer replaced from below.

The current administration is making a quixotic rearguard attempt to “bring back industry” by using tariffs to make domestic manufacture more competitive. It’s an appealing idea, but the chain of industrial engineering and highly skilled crafts that had developed continuously since the early 19th century was severed thirty or forty years ago when we abandoned most manufacturing.

We originally outsourced to China because Chinese labor was cheap. Chinese labor is not that cheap anymore, but we continue to go to China anyway because after forty years of China being the world’s manufacturing center, that’s where the advanced know-how is. We quite literally could not onshore large categories of manufacturing because we no longer have the tool-and-die makers, mold-makers, and engineers. We don’t even have enough of them to train up new people. It would be a multi-generation project to redevelop the expertise, just as it took China generations to develop their deep bench of expertise.

In other words, when we sent manufacturing overseas we deracinated the higher-level fields of industrial design and product engineering. It says on the packaging that your phone was designed in Cupertino, but is it really designed domestically when the myriad details inside the case can only be designed in Shenzhen, where they actually know how to make it?

What We Face

The radical elimination of programming and software engineering jobs is probably inevitable. The power of AI is just too great, and it comes with some enormous short-term side benefits, particularly in its democratization of the capability to write useful software.

Yet, just as with manufacturing, we are facing a “tragedy of the commons” situation. It is to every manager’s and CEO’s immediate benefit to use AI to slash development costs to the bone, and to the economic and career benefit of every AI developer who provides the tools to do it. Yet it is probably to society’s collective long-term disadvantage, because when we “offshore” software development to AI, we risk cutting off the wellspring of creativity that fuels the engine of progress.

As with offshoring manufacturing, offloading software development to machines is essentially cashing out intellectual capital, rather than allowing it to slowly compound.

Already, young people are fleeing computer science programs in droves because the field is correctly perceived as a dead-end career path. But it won’t be just the rank-and-file developers who flee; the brilliant will also seek more promising destinations.

The upside is that ordinary people will be able to afford housing in the Bay Area and in Redmond again.